STRANGE + WONDERFUL

RECENT STORIES

15 of the Most Quotable Movies Ever

One of the most fun things you can do in life is quote your favorite movies. It’s simple and seems silly, but my sense of humor is inherently tied to repeating my favorites. My family and I often chuckle as we work these lines into everyday conversation. Every generation has its…

25 Unforgettable Movies Adapted From Books

Most of the time, the book is going to be better than the movie. Film adaptations of popular novels often leave out important scenes,…

The Most Watched Movies On Streaming

Watching movies from home has never been easier. Thanks to advances in streaming services, moviegoers can enjoy the latest and greatest Hollywood films…

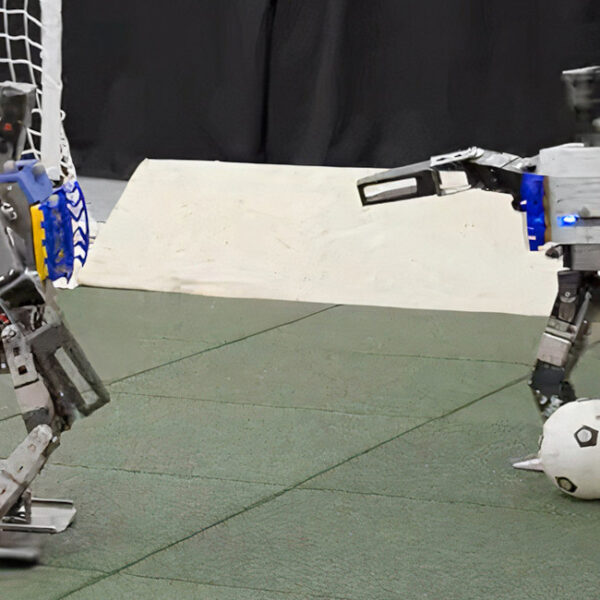

11 Songs That Never Fail To Bring Happiness

Music has brought joy to people’s lives for as long as we can remember. A perfect song can change our mood for many reasons…

14 Harmful Things Being Taught To Children

Children are our future, and it’s important to raise them right. However, some people feel they’re not being taught the right things. Here are…

15 Easy Ways To Sleep Better At Night

Getting a good night’s sleep could be the secret to being productive and having a positive attitude the following day. Still, many don’t get…

12 Unforgettable Female Characters From Movies and Television

While TV shows and movies are filled with unexceptional or shallow female characters, many on-screen women stir something amazing in us. Daring,…

The Best Plot Twists In Gaming History

The best video games feature incredible stories that rival the best from movies and television. These narratives are able to keep players hooked for…