STRANGE + WONDERFUL

RECENT STORIES

15 Best U.S. Hot Spots for Retail Therapy

Sometimes, a little retail therapy is all a person needs to lift their mood, and it’s even better if it’s done out of town. A short trip to another city for some shopping can be enough of a mood booster to get anyone through the next month or…

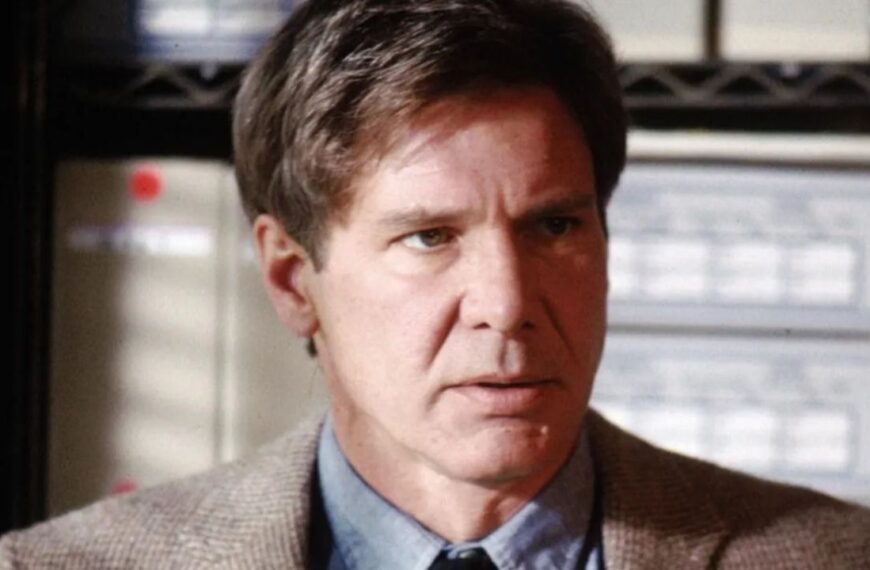

12 Plot Holes With Reasonable Explanations

Most of the time, plot holes are an unfortunate part of the movie-watching experience. They drag viewers out of their immersion and can sometimes…

15 Times Where The Bad Guy Wins

More often than not, movies end with the good guys victorious over the bad guys. It’s a tried and true formula that delivers the…

15 Budget-Friendly Ways to Prioritize Self-Care

A big new industry has grown up around self-care, and it’s great to see there are options for those prioritizing their physical and mental…

15 of the Funniest Sitcoms To Ever Air on TV

The first television sitcom aired in 1947, and TV has been delighting viewers ever since. Originally, sitcoms were focused on wholesome values and…

Popular Movie One-Liners We Love To Use In Everyday Life

Iconic movie quotes have become just as memorable as the movies themselves. While engaging plots or beloved characters can stick with us long after…

15 Ways to Beat Insomnia When You’ve Tried Everything

Getting a good night’s sleep could be the secret to being productive and having a positive attitude the following day. Still, many don’t get…

13 Beliefs Older Generations Now Embrace Thanks to Life’s Lessons

They say with age comes wisdom and that hindsight is 20/20. If you knew then what you know now, well, things might have been…