STRANGE + WONDERFUL

RECENT STORIES

Outdoor Adventures: 15 Health Benefits of Getting Back to Nature

Many of us struggle during the long winter months. We want to get outside and enjoy fresh air, but the cold and fewer hours of daylight deter us. With warmer temperatures on the way, it’s a good idea to remind ourselves of the health benefits of getting outdoors.…

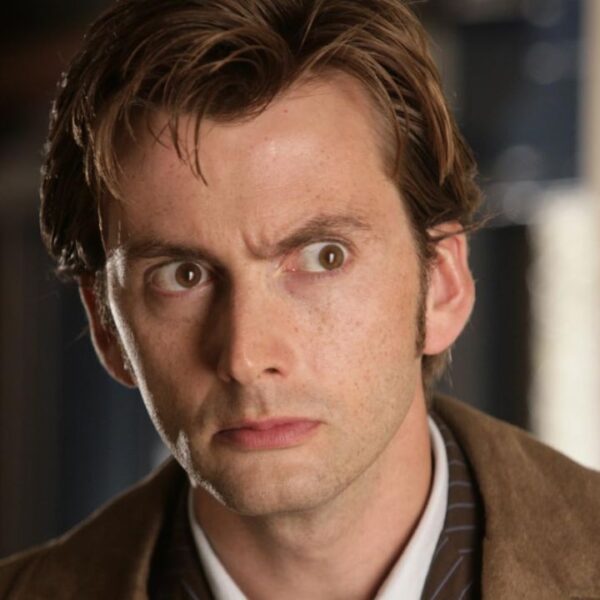

The Best David Tennant Roles

There’s just something about David Tennant that makes you sit up and take notice when he’s on the screen. It doesn’t matter if he’s…

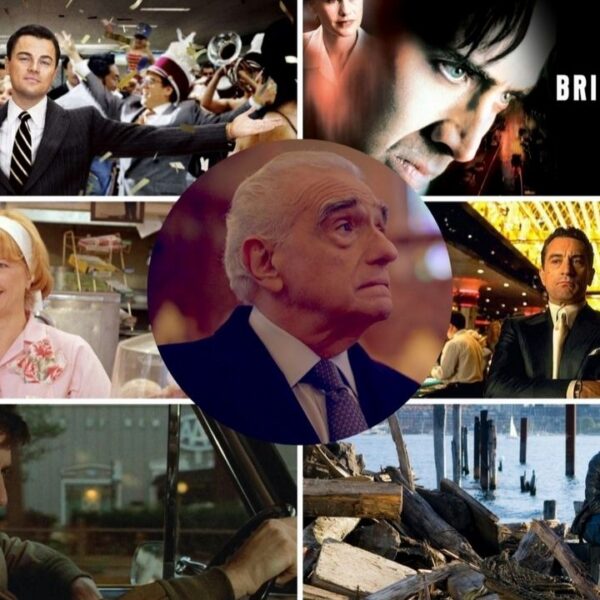

The Best Martin Scorsese Movies

Martin Scorsese has had one of the most illustrious careers in Hollywood. From his start making studio pictures to his pivot into imaginative biopics,…

15 British Actors That Can Play Americans Well

When I first moved to America, my accent stood out a lot, drawing admiration from many. Nobody told me I would have to fight…

Transform Your Morning Routine: 15 Habits for a Healthier Day

A great day starts with an effective morning routine, which prepares us for the challenges ahead. If you start sluggish, you are more likely…

The Truth Behind 15 Popular Superfoods: Do They Live up to the Hype?

We all want to be healthy and eat nutritious foods, and the media leads us to believe that superfoods significantly improve our health. Marketing…

Beloved 90s Comedies That We Never Get Tired Of

While you and I may view the 90s as something that happened 10-20 years ago, they actually began almost 25 years ago. Yet it…

15 Dangerous Things People Take Too Lightly

Today’s world has danger lurking around every corner. We’re not talking about the stories you see on the news, either. These are everyday issues…