STRANGE + WONDERFUL

RECENT STORIES

15 Things To Do Before Traveling Abroad – The Ultimate Checklist

You’ve booked your international flight, scored a unique Airbnb, and your anticipation is at a fever pitch. There’s nothing left to do but count down the days until the trip of a lifetime, right? Wrong. There are so many things you need to do. I’m here to help.…

Solo But Social: 15 Friendship Tips for Travelers in Their 40s

Adults often find it challenging to make new friends. This difficulty becomes even more pronounced during solo travel, especially for those dealing with social…

15 Surprising Things the Car You Drive Reveals About Your Personality

Ever wonder if your car reflects more than just your taste in vehicles? Sometimes, we read our astrology profile and think, wow, that sounds…

11 Essential Tips To Build A Stronger Financial Future

For many soon-to-be retirees, outliving their assets is one of their biggest fears. Retirement is a much-anticipated milestone for many, but the feeling isn’t…

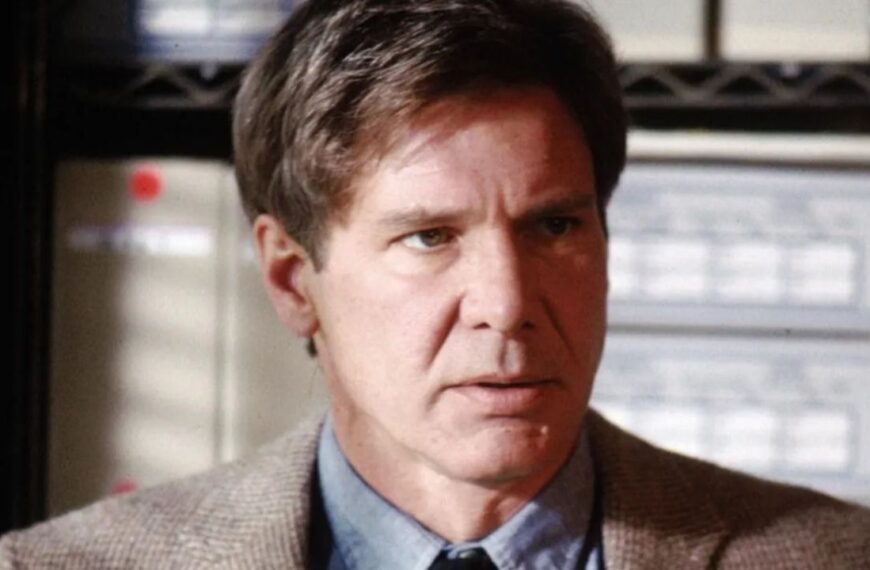

Karaoke Songs People Need To Stop Singing Immediately

Karaoke: it’s a timeless art that’s popular for a reason. Singing your favorite songs after a few drinks is a lot of fun! Yet…

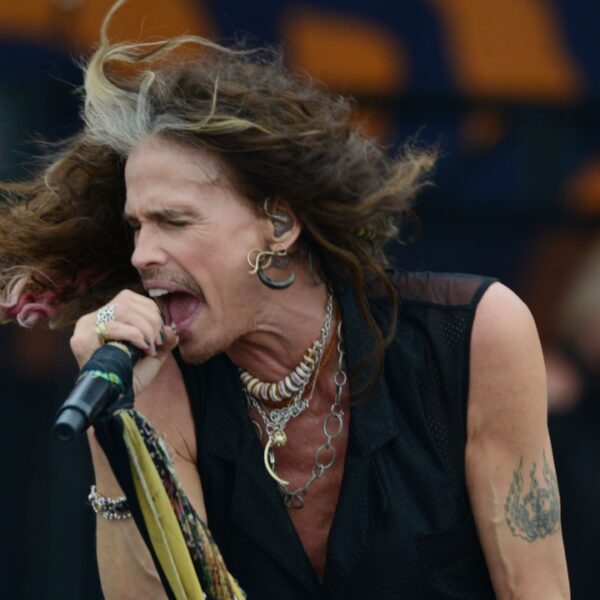

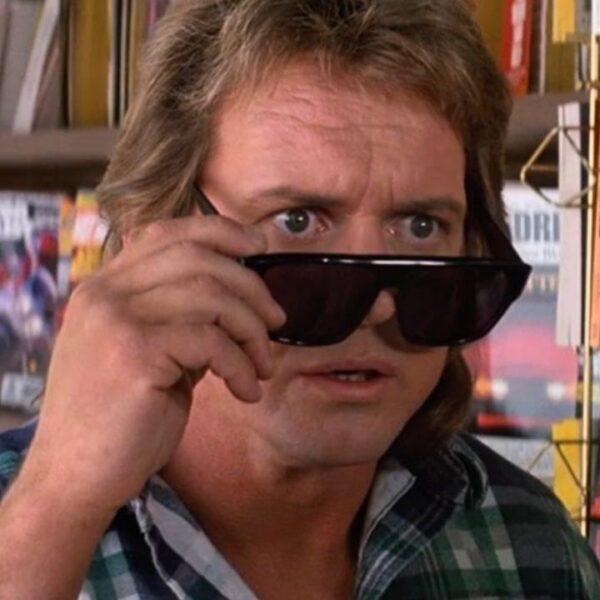

Sci-Fi Movies From the 80s With Cooler Tech Than We Have Today

Today, modern cinema is about one-upmanship. Science fiction, in particular, has been affected by the need to produce better computer-generated imagery (CGI) than its…

15 Vacation Destinations That Are Better Than Any Cruise in the World

While a cruise is a great vacation idea, ditch the potential seasickness, short stops at beautiful locations, and crowded ports for these lovely holiday…

15 Unique BBQ Traditions from Around the World To Try This Summer

In America, we’re accustomed to ribs, pulled pork, burnt ends, flavorful barbecue sauces, zesty dry rubs, and smoky flavors. However, there’s more to barbecue…